Saving (persisting) an object is not very complicated. The following code does it.

EntityTransaction tx = entityManager.getTransaction();

tx.begin();

Concert c = new Concert("Peter");

entityManager.persist(c);

tx.commit();

entityManager.close();

If you debug this first example, you will see that entityManager.persist does not issue an insert statement immediately; it only selects the next sequence value. This holds at least when a sequence is used as the id generator.

It is the call to tx.commit() that sends the SQL insert statement to the database. Calling persist makes an object persistent. If the id is configured to be generated, Hibernate ensures that it is generated and sets the id property. In addition, the entity is added to the persistence context of the session.

Persistence Context

Simplified: the persistence context contains a map for each entity. The key of the map is the id and the value is a composite of the entity instance itself and the original values of all fields when the object was added to the persistence context. The latter allows Hibernate to determine if a persistent object needs to be updated in the database.

When the persistence context is flushed, Hibernate first sends all insert statements to the database, then determines the required updates by comparing the entities to their original field values and sends those updates, and finally sends all deletes.
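The snapshot comparison and the flush order can be sketched with a toy model in plain Java. This is only an illustration under simplifying assumptions, not Hibernate's implementation: the classes ToyConcert and ToyPersistenceContext, the load method, and the textual statements are all hypothetical names invented for this sketch.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Toy model for illustration only; this is NOT how Hibernate is implemented.
class ToyConcert {
    final long id;
    String name;
    String city;

    ToyConcert(long id, String name, String city) {
        this.id = id;
        this.name = name;
        this.city = city;
    }
}

class ToyPersistenceContext {
    // A managed entry: the live instance plus a snapshot of its field values.
    private static final class Entry {
        final ToyConcert entity;
        String[] snapshot;

        Entry(ToyConcert entity) {
            this.entity = entity;
            this.snapshot = stateOf(entity);
        }
    }

    private final Map<Long, Entry> managed = new LinkedHashMap<>();
    private final Set<Long> pendingInserts = new LinkedHashSet<>();
    private final Set<Long> pendingDeletes = new LinkedHashSet<>();

    private static String[] stateOf(ToyConcert c) {
        return new String[] {c.name, c.city};
    }

    // Registers a new entity; the insert is only scheduled, not sent.
    void persist(ToyConcert c) {
        managed.put(c.id, new Entry(c));
        pendingInserts.add(c.id);
    }

    // Registers an entity as if it had just been loaded from the database.
    void load(ToyConcert c) {
        managed.put(c.id, new Entry(c));
    }

    // Schedules a delete for a managed entity.
    void remove(ToyConcert c) {
        pendingDeletes.add(c.id);
    }

    // Returns the statements a flush would send: inserts, then updates, then deletes.
    List<String> flush() {
        List<String> sql = new ArrayList<>();
        for (Long id : pendingInserts) {
            Entry e = managed.get(id);
            sql.add("insert concert " + id);
            e.snapshot = stateOf(e.entity); // inserted with its current values
        }
        for (Entry e : managed.values()) {
            String[] current = stateOf(e.entity);
            // Dirty check: compare the current values with the snapshot.
            if (!pendingDeletes.contains(e.entity.id) && !Arrays.equals(e.snapshot, current)) {
                sql.add("update concert " + e.entity.id);
                e.snapshot = current;
            }
        }
        for (Long id : pendingDeletes) {
            sql.add("delete concert " + id);
            managed.remove(id);
        }
        pendingInserts.clear();
        pendingDeletes.clear();
        return sql;
    }
}
```

In this sketch, changing both name and city of a loaded ToyConcert before calling flush yields a single update entry, because the dirty check compares the whole snapshot once per entity.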

The call to commit will cause a flush of the persistence context before the commit is sent to the database.

There are multiple reasons for this behavior:

Fewer update statements

If you change multiple fields or add a relation while an entity is in persistent state, Hibernate sends only one update statement at the end.

JDBC batching

Hibernate can group updates and make use of JDBC batching. This is more efficient than sending statements one by one.

Reduced duration of locks

Sending an insert or update statement causes a row or page lock, depending on the database. Sending such statements at the end, just before the transaction commit, means the lock exists only for a short time. This reduces the risk of concurrent users waiting for locks and of deadlocks.

Therefore, Hibernate does only the minimum required to determine the id of the entity and to add the entity to the persistence context of the session. If the id is a generated value and the generator is a sequence or a table, Hibernate just selects the value. But if the id is generated on insert, for example by an identity (auto-increment) column in Microsoft SQL Server, Hibernate needs to send the insert statement immediately to get an id generated.
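The difference between the two kinds of generators can be sketched as a small simulation. The names ToyIdStrategy and ToyIdSession and the logged statement texts are hypothetical and only serve this illustration; the sketch records which statements would reach the database at persist time and which would wait for the flush.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model for illustration only; names and statement texts are made up.
enum ToyIdStrategy { SEQUENCE, IDENTITY }

class ToyIdSession {
    // Statements that have already been sent to the (simulated) database.
    final List<String> sentSql = new ArrayList<>();
    // Inserts that wait for the next flush.
    private final List<String> deferredInserts = new ArrayList<>();
    private final ToyIdStrategy strategy;
    private long nextId = 1;

    ToyIdSession(ToyIdStrategy strategy) {
        this.strategy = strategy;
    }

    long persist(String entityName) {
        long id = nextId++;
        if (strategy == ToyIdStrategy.SEQUENCE) {
            // The id is known after selecting the sequence value; the insert can wait.
            sentSql.add("select nextval");
            deferredInserts.add("insert " + entityName + " id=" + id);
        } else {
            // The id only exists once the row is inserted, so the insert is sent now.
            sentSql.add("insert " + entityName + " (id generated by database: " + id + ")");
        }
        return id;
    }

    void flush() {
        sentSql.addAll(deferredInserts);
        deferredInserts.clear();
    }
}
```

With ToyIdStrategy.SEQUENCE, sentSql contains only the sequence select until flush is called; with ToyIdStrategy.IDENTITY, the insert appears immediately after persist.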

Saving becomes more complex when you start to use relations.

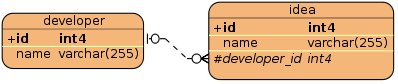

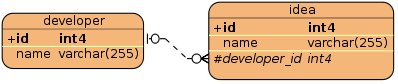

We have the following tables, mapped as a 1:n relation. We define that developer_id must not be null: an idea cannot exist without a developer. The database will have a not null constraint on the column developer_id.

Developer d = new Developer();

Idea idea = new Idea();

d.getIdeas().add(idea);

idea.setDeveloper(d);

You must take care that the idea is not saved before the developer_id is defined. If you save the idea first, then the developer_id is not yet defined and you will receive a constraint violation exception.

Use case: no cascading defined

You must call persist in the following order:

entityManager.persist(developer);

entityManager.persist(idea);

Use case: cascading from developer to idea

You only need to call

entityManager.persist(developer);
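The ordering rule for both use cases can be sketched with a toy model. It is not Hibernate's cascade logic; ToyDeveloper, ToyIdea and ToyCascadeSession are hypothetical classes, and the not null constraint is simulated by a simple check that the developer has been scheduled before the idea.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model for illustration only; not Hibernate's real cascade logic.
class ToyDeveloper {
    final List<ToyIdea> ideas = new ArrayList<>();
}

class ToyIdea {
    ToyDeveloper developer;
}

class ToyCascadeSession {
    private final boolean cascadePersist;
    // Entities in the order in which their inserts would be scheduled.
    final List<Object> scheduledInserts = new ArrayList<>();

    ToyCascadeSession(boolean cascadePersist) {
        this.cascadePersist = cascadePersist;
    }

    void persist(ToyDeveloper developer) {
        scheduledInserts.add(developer);
        if (cascadePersist) {
            // Cascading: the ideas are persisted right after their developer.
            for (ToyIdea idea : developer.ideas) {
                persist(idea);
            }
        }
    }

    void persist(ToyIdea idea) {
        // developer_id is NOT NULL: the developer must be known first.
        if (idea.developer == null || !scheduledInserts.contains(idea.developer)) {
            throw new IllegalStateException("developer_id would be null");
        }
        scheduledInserts.add(idea);
    }
}
```

With cascading enabled, one call to persist for the developer schedules both inserts in parent-first order. Without cascading, persisting the idea before the developer is rejected in this sketch, which mirrors the constraint violation described above.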

Considerations

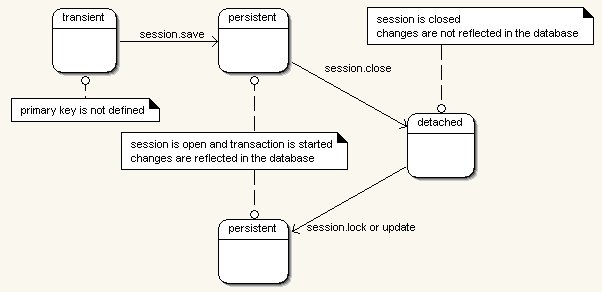

We discussed the states of an object in Chapter 2, Hibernate Concepts - State of Objects. The figure used in that chapter is shown here again.

Updating an entity requires that you get your object in persistent state. One approach is to fetch an object from the database within a transaction, apply your changes and commit the transaction.


EntityTransaction tx = entityManager.getTransaction();

tx.begin();

Country country = entityManager.find(Country.class, 4711);

country.setName("United Kingdom");

tx.commit();

entityManager.close();

The code above contains no call to merge. Working with Hibernate means working with objects and states. It is quite different from JDBC, where you send insert or update SQL statements to the database.

Java Persistence has only one method to make an existing object persistent: merge.

Hibernate loads the object from the session or the cache or, if it is not there, from the database. Then it copies the values of your object to the loaded object and returns the loaded object. The returned object is in persistent state. Your original object is not attached to the session.

Pitfall using merge

Imagine you have a reference to a detached object. You want to reattach your object to an EntityManager and change the first name to Peter4. Do you think you have successfully changed the name?

EntityTransaction tx = entityManager.getTransaction();

tx.begin();

entityManager.merge(contact);

contact.setFirstName("Peter4");

tx.commit();

You have not! The merge method looks up the object in the session. If it is not found, a new instance is loaded. Then the attributes of contact are copied to the found or loaded object. Changing contact afterwards has no influence on the object in the session. You must apply your changes to the object returned by merge.
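The copy semantics of merge can be sketched in plain Java. This is a toy model under simplifying assumptions, not the JPA implementation: ToyContact and ToyMergeContext are hypothetical classes, and loading from the database is replaced by creating an empty managed instance.

```java
import java.util.HashMap;
import java.util.Map;

// Toy model for illustration only; not the real EntityManager.merge.
class ToyContact {
    final long id;
    String firstName;

    ToyContact(long id, String firstName) {
        this.id = id;
        this.firstName = firstName;
    }
}

class ToyMergeContext {
    private final Map<Long, ToyContact> managed = new HashMap<>();

    // Copies the state of the passed instance onto the managed instance
    // and returns the managed instance, never the argument itself.
    ToyContact merge(ToyContact detached) {
        ToyContact copy = managed.computeIfAbsent(detached.id, id -> new ToyContact(id, null));
        copy.firstName = detached.firstName;
        return copy;
    }

    // The value that would be written for a managed id at the next flush.
    String managedFirstName(long id) {
        return managed.get(id).firstName;
    }
}
```

In this sketch, setting firstName on the instance passed to merge leaves managedFirstName unchanged, while setting it on the returned instance changes it.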

The solution is to assign the object returned by merge to a variable, here newInstance. Then you can apply your changes to that variable directly.

Contact newInstance = entityManager.merge(contact);

newInstance.setFirstName("Peter4");

An object can be removed by calling entityManager.remove.

EntityTransaction tx = entityManager.getTransaction();

tx.begin();

Contact merged = entityManager.merge(contact);

entityManager.remove(merged);

tx.commit();

entityManager.close();

There is a difference compared to the Hibernate Session API: you need to make the object persistent before removing it. If the object was already deleted from the database, you will encounter an exception.

org.hibernate.StaleStateException: Batch update returned unexpected row count from update: 0 actual row count: 0 expected: 1

Further attention is needed when relations are used. Let us reuse the developer and idea example from above.

We will define that an idea can only exist with a developer. This means that developer_id must not be null.

In this case you cannot delete a developer without deleting the ideas first. We have two options to delete the objects.

Deletion without cascading

Reattach your developer, delete all the ideas first, and then delete the developer.

em = factory.createEntityManager();

em.getTransaction().begin();

paolo = em.merge(paolo);

for (Idea idea : paolo.getIdeas()) {

em.remove(idea);

}

em.remove(paolo);

em.getTransaction().commit();

em.close();

Have a look at the test class of the relation mapping examples for further examples.

Cascading is explained in detail in the section called “Cascading”. Here, I present only a short description. If you have set cascading to delete or all, you can just delete the developer and Hibernate will delete the ideas for you.

In this section, I will describe further commands provided by the EntityManager.

EntityManager.lock

entityManager.lock can be used to place a lock on an object in persistent state. This method behaves differently from the lock method of Hibernate's Session, which makes an object persistent as well.

EntityManager em = factory.createEntityManager();

em.getTransaction().begin();

player = em.merge(player);

em.lock(player, LockModeType.PESSIMISTIC_READ);

em.getTransaction().commit();

em.close();

There are different options for the LockModeType:

LockModeType.NONE

This is the default behaviour. If entities are cached, the cache is used. If a version column is present, the version is incremented and verified during the update SQL statement. You will find more details about @Version in the section called “Optimistic Locking”.

LockModeType.OPTIMISTIC

The object is read from the database and not from the cache. If it was already read from the database, it will not be read again. If a version column is defined and the object was changed, then the version is incremented and verified during the update. This is the same as the JPA 1 option LockModeType.READ.

LockModeType.OPTIMISTIC_FORCE_INCREMENT

Same as OPTIMISTIC, but the version is incremented even if the object was unchanged. This is the same as the JPA 1 option LockModeType.WRITE.

LockModeType.PESSIMISTIC_READ

Reads the object from the database for shared usage. For a PostgreSQL database the SQL looks like select id from Player where id =? for share.

LockModeType.PESSIMISTIC_WRITE

Tries to create an update lock and throws an exception if this is not immediately possible. This is not supported by all databases. The object is read from the database for exclusive usage. For a PostgreSQL database the SQL looks like select id from Player where id =? for update. If a version column exists and the object was changed, the version is incremented and verified during the update.
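The phrase "the version is incremented and verified during the update" can be sketched as follows. This is a toy model of the idea behind a version column, not Hibernate code: ToyVersionedTable and ToyStaleVersionException are hypothetical names, and the update method stands in for an SQL update whose where clause includes the expected version.

```java
import java.util.HashMap;
import java.util.Map;

// Toy model for illustration only; names are made up for this sketch.
class ToyStaleVersionException extends RuntimeException {
    ToyStaleVersionException(String message) {
        super(message);
    }
}

class ToyVersionedTable {
    private final Map<Long, Integer> versions = new HashMap<>();
    private final Map<Long, String> names = new HashMap<>();

    void insert(long id, String name) {
        versions.put(id, 0);
        names.put(id, name);
    }

    // Models: update player set name=?, version=version+1 where id=? and version=?
    // Returns the new version, or throws if no row matched the expected version.
    int update(long id, String newName, int expectedVersion) {
        Integer current = versions.get(id);
        if (current == null || current != expectedVersion) {
            throw new ToyStaleVersionException(
                "row " + id + " was changed or deleted by another transaction");
        }
        names.put(id, newName);
        versions.put(id, current + 1);
        return current + 1;
    }

    String name(long id) {
        return names.get(id);
    }
}
```

A second writer that still holds the old version number fails in this sketch, and the row keeps the value written by the first writer.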

I could not find a lock with no wait option as supported by the Hibernate Session API. The query hint to timeout the lock seems to be database dependent. It does not work with PostgreSQL, though it is supported by the database.

Map<String,Object> map = new HashMap<String, Object>();

map.put("javax.persistence.lock.timeout", 2);

em.find(Player.class, 1, LockModeType.PESSIMISTIC_WRITE, map);

Note

There are bugs related to locking which were fixed with version 3.6 Final. See https://hibernate.onjira.com/browse/HHH-5032

Reloading an entity

If a column of a table is calculated by a database trigger, it might be required to reload the object. A call to refresh will reread the object from the database.

em.refresh(developer);

An object in persistent state is stored in the persistence context and cannot be garbage collected even if it is no longer referenced from your code. Sometimes you want to limit the size of the session, for example to keep memory consumption low. The detach method (evict in the Hibernate Session API) removes one object from the session. If the object was changed, the update statement has most likely not yet been sent to the database. If you do not want to lose your changes, call flush first to send all pending changes to your database.

em.flush();

em.detach(developer);

We have to consider Hibernate's behaviour when using detach. Hibernate does not write every change to an object immediately to the database but tries to optimise the insert/update statements. All collected changes are written to the database before you execute a query or when you commit a transaction.

A manual call to flush is only required in special use cases. By default, the persistence context is flushed when you call commit, for example, before the commit is actually sent to the database.